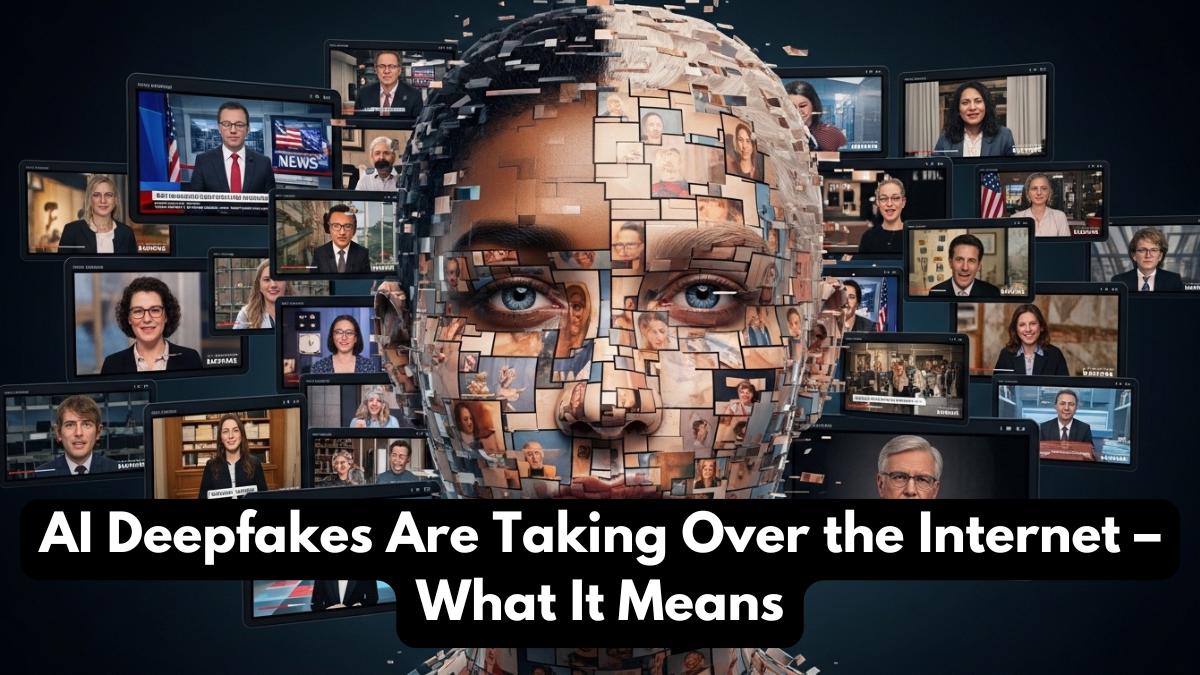

Artificial intelligence has brought remarkable innovations in entertainment, communication, and digital creativity. Along with these advancements comes a new technological challenge that is spreading rapidly across the internet: celebrity AI deepfakes. Deepfake technology uses artificial intelligence algorithms to create highly realistic images, videos, or audio that mimic real individuals. These digital creations can make it appear as though a public figure has said or done something that never actually happened. As the technology becomes more sophisticated, discussions about AI deepfake risks are growing around the world.

The rise of celebrity AI deepfakes has sparked debates among technology experts, legal authorities, and social media platforms. While deepfake tools can be used for creative purposes such as filmmaking and digital art, they can also be misused to spread misinformation or manipulate public opinion. Because of these concerns, understanding AI deepfake risks has become increasingly important for both internet users and policymakers.

In 2026, the conversation around celebrity AI deepfakes is expanding as more examples appear across social media and entertainment platforms. These AI-generated videos often look extremely convincing, making it difficult for viewers to distinguish between real and fake content. This growing challenge highlights why awareness about AI deepfake risks is essential in the digital age.

What Are AI Deepfakes?

Deepfakes are synthetic media created using artificial intelligence and machine learning techniques. These technologies analyze large datasets of images, videos, and audio recordings to generate new content that closely resembles real individuals. When applied to famous personalities, this technology produces celebrity AI deepfakes that can imitate a person’s face, voice, and expressions.

The technology behind celebrity AI deepfakes relies on deep learning models that study patterns in visual and audio data. By training on existing footage, the AI system can recreate a realistic digital version of a person. This process allows developers to produce videos where celebrities appear to speak or act in ways they never actually did.

While deepfake technology can have creative applications in filmmaking and entertainment, experts warn about the potential AI deepfake risks associated with misuse. When manipulated videos are shared online, they can spread misinformation or damage reputations. As a result, the rise of celebrity AI deepfakes has become a major concern in discussions about digital ethics and online safety.

How Celebrity AI Deepfakes Are Created

The process of creating celebrity AI deepfakes involves advanced artificial intelligence models trained on large amounts of visual and audio data. Developers collect images and videos of a person and use machine learning algorithms to analyze facial movements, voice patterns, and expressions.

Once the AI system learns these patterns, it can generate new content that replicates the individual’s appearance or speech. This process is often used to produce celebrity AI deepfakes that look incredibly realistic.

The typical process for generating deepfakes includes:

• Collecting video and audio data of a public figure

• Training deep learning models to analyze facial and voice patterns

• Using generative algorithms to produce new synthetic media

• Editing the final content to appear realistic

While this technology demonstrates the power of artificial intelligence, it also raises serious AI deepfake risks related to privacy, misinformation, and digital security.

Major AI Deepfake Risks

The rapid growth of celebrity AI deepfakes has introduced several challenges that affect both individuals and society. One of the biggest concerns is the spread of misinformation. When deepfake videos circulate online, they can mislead viewers into believing false narratives.

Another significant risk is reputation damage. Public figures may find themselves falsely portrayed in controversial or misleading situations through manipulated media. Because celebrity AI deepfakes can appear extremely convincing, they can harm a person’s public image before the truth becomes clear.

Some of the most concerning AI deepfake risks include:

• Spreading misinformation and fake news

• Manipulating political narratives

• Damaging reputations of public figures

• Creating fraudulent or deceptive content

• Increasing cybercrime and digital fraud

These challenges demonstrate why the rise of celebrity AI deepfakes requires careful regulation and public awareness.

Comparison of Real Media vs AI Deepfakes

The following table highlights the differences between authentic media content and AI-generated deepfakes.

| Media Type | Characteristics | Reliability |
|---|---|---|
| Real Video Content | Recorded from real events and verified sources | High reliability |
| AI Deepfakes | Generated using artificial intelligence algorithms | May be misleading |
| Edited Media | Altered through traditional video editing | Moderately reliable |

This comparison illustrates why AI deepfake risks have become a serious concern. Traditional editing alters footage that was actually recorded, whereas the tools behind celebrity AI deepfakes can generate entirely synthetic media that appears authentic.

Impact on Celebrities and Public Figures

The rise of celebrity AI deepfakes has significantly impacted how public figures manage their digital presence. Actors, musicians, politicians, and influencers are increasingly vulnerable to manipulated media created using artificial intelligence.

One major concern is the loss of control over one’s digital identity. When deepfake videos circulate online, they can reach millions of viewers within hours. Even if the content is later proven false, the damage caused by celebrity AI deepfakes may persist.

Public figures are now working with legal experts and digital security specialists to address AI deepfake risks. Many governments and technology companies are also developing policies and tools to detect and remove manipulated content.

How Technology Companies Are Responding

Technology companies and social media platforms are increasingly aware of the challenges posed by celebrity AI deepfakes. Many organizations are developing advanced detection tools that can identify manipulated media.

Artificial intelligence itself is being used to combat AI deepfake risks. Researchers are creating algorithms capable of detecting subtle inconsistencies in synthetic videos, such as unnatural facial movements or audio mismatches.
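The idea of spotting "unnatural facial movements" can be illustrated with a very small sketch. It assumes that some upstream face tracker (not shown, and hypothetical here) has already measured how far a facial landmark moves between consecutive frames. The function name `flag_unnatural_frames`, the sample numbers, and the 3.5 threshold are illustrative assumptions, and a simple outlier test like this is far weaker than the learned detectors researchers actually build.

```python
from statistics import median


def flag_unnatural_frames(displacements, threshold=3.5):
    """Return indices of frames whose landmark movement is a statistical outlier.

    Scores each value with the modified z-score, which is based on the
    median absolute deviation (MAD) and is less distorted by the outliers
    themselves than a mean/standard-deviation test.
    """
    if not displacements:
        return []
    med = median(displacements)
    mad = median(abs(d - med) for d in displacements)
    if mad == 0:
        # More than half of the values are identical, so the MAD cannot
        # scale the scores; this toy version simply reports nothing.
        return []
    flagged = []
    for index, value in enumerate(displacements):
        score = 0.6745 * abs(value - med) / mad
        if score > threshold:
            flagged.append(index)
    return flagged


# Hypothetical per-frame movement (in pixels) of a single facial landmark.
sample = [1.0, 1.2, 0.9, 1.1, 9.5, 1.0, 1.3, 0.8]
print(flag_unnatural_frames(sample))  # frame 4 jumps far more than the rest
```

A sudden jump is only a hint that something is off: fast head turns or scene cuts in genuine footage would be flagged as well, which is why practical systems combine many signals.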

Some measures being implemented to reduce AI deepfake risks include:

• AI-powered deepfake detection systems

• Content moderation policies on social media platforms

• Digital watermarking for authentic media

• Legal regulations against malicious deepfake creation

These initiatives aim to reduce the harmful impact of celebrity AI deepfakes and protect individuals from digital manipulation.
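To give a feel for the digital watermarking measure listed above, here is a toy sketch that hides a short bit pattern in the least significant bit of each pixel value. The function names and the sample pixel values are assumptions made for illustration. This kind of least-significant-bit mark does not survive compression or re-encoding, so it should be read as a demonstration of the concept rather than a technique platforms are known to use.

```python
def embed_watermark(pixels, bits):
    """Return a copy of pixels with bits written into the lowest bit of each value."""
    if len(bits) > len(pixels):
        raise ValueError("watermark is longer than the pixel data")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the watermark bit.
        marked[i] = (marked[i] & ~1) | bit
    return marked


def extract_watermark(pixels, length):
    """Read back the lowest bit of the first `length` pixel values."""
    return [p & 1 for p in pixels[:length]]


# Hypothetical 8-bit grayscale pixel values and an 8-bit watermark.
pixels = [200, 13, 77, 154, 91, 36, 250, 8]
mark = [1, 0, 1, 1, 0, 0, 1, 0]

marked = embed_watermark(pixels, mark)
print(extract_watermark(marked, len(mark)) == mark)  # the mark is recoverable
```

Each pixel value changes by at most 1, which is why a mark like this is invisible to viewers while still being readable by software that knows where to look.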

The Future of Deepfake Technology

As artificial intelligence continues to evolve, the technology behind celebrity AI deepfakes will likely become even more sophisticated. This development raises important questions about digital trust and the authenticity of online content.

Researchers are working on solutions to address AI deepfake risks, including authentication systems that verify the origin of digital media. These technologies could help internet users identify whether a video is real or artificially generated.
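A minimal sketch of origin verification, under simplifying assumptions: the publisher computes a keyed tag over the media file when it is released, and anyone holding the same key can later check that the bytes are unchanged. The function names, the key, and the sample bytes are hypothetical. A shared-secret HMAC is used only to keep the example within Python's standard library; deployed provenance schemes generally rely on public-key signatures so that viewers can verify a file without holding any secret.

```python
import hashlib
import hmac


def sign_media(media_bytes, secret_key):
    """Return a hex tag binding the media bytes to the publisher's key."""
    return hmac.new(secret_key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(media_bytes, secret_key, tag):
    """Check that the media bytes still match the tag issued at publication."""
    expected = sign_media(media_bytes, secret_key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, tag)


# Hypothetical key and media content, for illustration only.
key = b"publisher-demo-key"
original = b"frame data of the authentic video"
tag = sign_media(original, key)

print(verify_media(original, key, tag))                   # unchanged file
print(verify_media(original + b" (altered)", key, tag))   # modified file
```

A check like this can show that a file is unchanged since it was signed, but it cannot say whether the signed content was genuine in the first place; that still depends on trusting the signer.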

The future of digital media will likely involve a combination of innovation and regulation. While deepfake technology may continue to expand creative possibilities, addressing AI deepfake risks will remain essential for maintaining trust in online information.

Conclusion

The rapid rise of celebrity AI deepfakes highlights both the power and the challenges of modern artificial intelligence technology. While deepfake tools can create impressive digital content, they also introduce serious concerns about misinformation, privacy, and digital security.

Understanding AI deepfake risks is essential for navigating the evolving digital landscape. As these technologies become more accessible, individuals must learn how to critically evaluate online content and verify information sources.

Governments, technology companies, and researchers are actively working to address the growing impact of celebrity AI deepfakes. By developing detection systems, legal frameworks, and public awareness campaigns, society can reduce AI deepfake risks while continuing to benefit from advancements in artificial intelligence.

FAQs

What are celebrity AI deepfakes?

Celebrity AI deepfakes are AI-generated videos or images that realistically mimic the appearance or voice of famous individuals.

What are the main AI deepfake risks?

Major AI deepfake risks include misinformation, reputation damage, cybercrime, and manipulation of public opinion.

How are celebrity AI deepfakes created?

Developers create celebrity AI deepfakes using machine learning algorithms trained on large datasets of images and videos.

Can AI deepfakes be detected?

Yes, researchers are developing AI-based detection tools that can identify manipulated media and reduce AI deepfake risks.

Why are AI deepfakes becoming more common?

Advancements in artificial intelligence and easier access to deep learning tools have contributed to the rise of celebrity AI deepfakes.
